Accelerator

State of Cloud HPC (High Performance Computing) in 2024

High-performance computing (HPC), also known as supercomputing, can operate in cloud-based environments or on-site. Given that HPC tasks are often data-intensive, which can drive up expenses and demand substantial computational resources, cloud computing offers a solution by reducing initial setup costs and supplying the necessary computational power through the service provider.

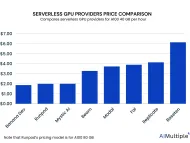

Top 10 Serverless GPUs: A comprehensive vendor selection in '24

Large language models (LLMs) like ChatGPT have been a hot topic in the business world since last year. As a result, the number of these models has increased drastically. Yet one major challenge prevents more enterprises from adopting LLMs: the system costs of developing these models.

Top 10 Cloud GPU Providers in 2024

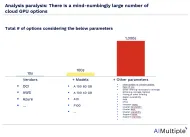

GPU procurement has become more complex as more providers offer cloud GPU options. AIMultiple analyzed GPU cloud providers across the most relevant dimensions to facilitate cloud GPU procurement. In listing the pros and cons of each provider, we relied on user reviews on G2, other online reviews, and our own assessment.

Cloud GPUs for Deep Learning: Availability & Price / Performance

If you are flexible about the GPU model, identify the most cost-effective cloud GPU. If you prefer a specific model (e.g. A100), identify the GPU cloud providers offering it. If you are undecided between on-prem and the cloud, explore whether to buy GPUs or rent them on the cloud.
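
The first two selection steps can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not AIMultiple's methodology: the `Offer` record, the provider names, the prices, and the `relative_throughput` scores are all hypothetical placeholders, and "cost-effective" is assumed here to mean the lowest hourly price per unit of benchmark throughput.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offer:
    """One cloud GPU listing. All values used below are hypothetical."""
    provider: str
    gpu_model: str
    usd_per_hour: float
    relative_throughput: float  # assumed benchmark score, higher is faster


def cost_per_throughput(offer: Offer) -> float:
    """Hourly price divided by throughput; lower means more cost-effective."""
    return offer.usd_per_hour / offer.relative_throughput


def most_cost_effective(offers: list[Offer]) -> Offer:
    """Case 1: flexible about the GPU model, so compare every offer."""
    return min(offers, key=cost_per_throughput)


def providers_offering(offers: list[Offer], gpu_model: str) -> list[str]:
    """Case 2: a specific model is required, so list who offers it."""
    return sorted({o.provider for o in offers if o.gpu_model == gpu_model})


# Placeholder data for illustration only, not real prices or benchmarks.
OFFERS = [
    Offer("provider-a", "A100", 4.00, 2.0),
    Offer("provider-b", "A100", 3.00, 2.0),
    Offer("provider-b", "T4", 0.50, 0.25),
    Offer("provider-c", "V100", 2.00, 1.0),
]

if __name__ == "__main__":
    best = most_cost_effective(OFFERS)
    print(best.provider, best.gpu_model, cost_per_throughput(best))
    print(providers_offering(OFFERS, "A100"))
```

In practice the throughput figure would come from a benchmark that matches your own workload, since a GPU that is cost-effective for training may not be for inference.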

What is Accelerated Computing? Benefits & Use Cases in 2024

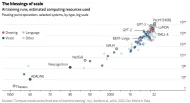

Both the number of parameters and the size of models, meaning the width and depth of neural networks (see Figure 1), are skyrocketing. Richer data means better predictive capabilities for businesses to anticipate customer preferences, trends, fraud, the climate, anything, really. But to analyze the data more effectively, companies need more computing power.

Top 10 AI Chip Makers of 2024: In-depth Guide

As the figure above illustrates, the number of parameters in neural networks (and with it their width and depth), and therefore model size, is increasing. To build better deep learning models and power generative AI applications, organizations require increased computing power and memory bandwidth.

AI Chips: A Guide to Cost-efficient AI Training & Inference in 2024

In the last decade, machine learning, especially deep neural networks, has played a critical role in the emergence of commercial AI applications. Deep neural networks were successfully implemented in the early 2010s thanks to the increased computational capacity of modern computing hardware. AI hardware is a new generation of hardware custom-built for machine learning applications.