Machine Learning Accuracy: True-False Positive/Negative [2024]

![Machine Learning Accuracy: True-False Positive/Negative [2024]](https://research.aimultiple.com/wp-content/uploads/2019/07/positive-negative-true-false-matrix-382x139.png.webp)

There are various theoretical approaches to measuring the accuracy* of competing machine learning models. However, in most commercial applications, you simply need to assign a business value to 4 types of results: true positives, true negatives, false positives and false negatives. By multiplying the number of results in each bucket by the associated business value and summing the products, you can compare models and choose the one that generates the most value.

Further complicating this situation are the confidence values provided by the model. Almost all machine learning models can be built to provide a level of confidence for their answers. A high-level approach to using this value in accuracy* measurement is to multiply it by the results, essentially rewarding the model for providing high confidence values for its correct assessments.

However, more sophisticated approaches are possible. For example, if all low-confidence predictions will be manually reviewed, then assigning a manual labor cost to low-confidence predictions and taking their results out of the model accuracy* measurement gives a closer approximation of the business value generated by the model.

Let us take you through these 3 steps to computing machine learning model accuracy*. And just to clarify, here we use the word accuracy to mean the business value of the model. A more detailed discussion of why this may not be a great term and why we are using it is in the footnote.

What are the possible results of a model?

As demonstrated in the featured image, a model’s individual predictions can be either true or false, meaning the model is right or wrong. The actual value of the data point also matters. You may wonder why we need a model that makes predictions if we already know the actual values. Here, we are referring to the model’s performance on labeled data, that is, data where we already know the answers (ideally a held-out set that the model was not trained on). The actual value of a data point can be either one of the values we are trying to identify in the dataset (positive) or any other value (negative). Therefore, the 4 possible results of a model’s individual predictions are:

- True positive: The prediction is correct and the actual value is positive (i.e. this value is one of the values that the model was trying to identify. In the case of a model that tries to identify customers who are likely to buy the product, this data point represents a potential buyer whom the model flagged as a buyer)

- False positive: The prediction is wrong and the actual value is negative (i.e. the model flagged the customer as a likely buyer, but the customer is not interested in buying)

- True negative: The prediction is correct and the actual value is negative (i.e. this value is not one of the values that the model was trying to identify. In the case of a model that tries to identify customers who are likely to buy the product, this data point represents a customer who is not interested in buying and whom the model did not flag)

- False negative: The prediction is wrong and the actual value is positive (i.e. the model predicted that the customer would not buy, but the customer was in fact a potential buyer)

How to Assign Business Values to Outcomes

All 4 outcomes listed above have different business values. Let’s continue with the example of the model that tries to identify customers who are potential buyers. The potential business values of these outcomes are:

- True positive: The contribution margin (i.e. the value of the sale after all variable costs). Thanks to the model, we identified the right customer and made the sale, therefore all incremental value of the sale should be attributed to the model.

- False positive: The marketing costs to reach the customer and the cost of annoyance caused to the customer as a result of reaching her with an offer she is not interested in. The latter cost is often overlooked. However, it is important because by unnecessarily reaching customers, we cause some to unsubscribe or stop paying attention to our messages, making future sales less likely.

- True negative: No value. No action was taken and no opportunity was missed, so these are neutral data points.

- False negative: Negative of the contribution margin. This could have been a sale, but the model classified the customer as not interested, so no offer was made and the sale did not happen. Because of the model, we were not able to close this sale.
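To make the four buckets concrete, here is a minimal Python sketch that tallies them from paired lists of actual and predicted labels. It uses the standard convention: a false positive is a data point the model predicted as positive whose actual value is negative, and a false negative is a data point the model predicted as negative whose actual value is positive. The function name `count_outcomes` and the sample labels are illustrative assumptions, not part of any specific library.

```python
from collections import Counter


def count_outcomes(actual, predicted):
    """Tally TP, FP, TN and FN from paired booleans (True means positive)."""
    counts = Counter({"TP": 0, "FP": 0, "TN": 0, "FN": 0})
    for is_positive, predicted_positive in zip(actual, predicted):
        if predicted_positive and is_positive:
            counts["TP"] += 1  # flagged as buyer, really a buyer
        elif predicted_positive and not is_positive:
            counts["FP"] += 1  # flagged as buyer, not actually interested
        elif not predicted_positive and not is_positive:
            counts["TN"] += 1  # not flagged, not interested
        else:
            counts["FN"] += 1  # not flagged, but was a potential buyer
    return dict(counts)


# Hypothetical labels for six customers (True = potential buyer).
actual = [True, True, False, False, True, False]
predicted = [True, False, True, False, True, False]

outcome_counts = count_outcomes(actual, predicted)
print(outcome_counts)
```

The labels must come from data where the true answer is already known, otherwise there is nothing to compare the predictions against.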

How to Use Business Value of Outcomes to Calculate Model Value

By multiplying the number of results in each bucket by its business value and summing the four products, we arrive at the value of the model.
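As a rough illustration of this calculation, the sketch below compares two models on the same 10,000 customers. All figures are made-up assumptions: a contribution margin of 50 per sale, an outreach and annoyance cost of 3 per wasted offer, and invented outcome counts. A false positive here means an offer sent to an uninterested customer, and a false negative means a missed sale.

```python
def model_value(counts, values):
    """Sum of (number of results in each bucket) x (business value of that bucket)."""
    return sum(counts[bucket] * values[bucket] for bucket in ("TP", "FP", "TN", "FN"))


def share_correct(counts):
    """Share of predictions that were right, for comparison with business value."""
    return (counts["TP"] + counts["TN"]) / sum(counts.values())


# Hypothetical business value per outcome, in currency units.
values = {
    "TP": 50.0,   # contribution margin of a sale
    "FP": -3.0,   # outreach cost plus annoyance of an unwanted offer
    "TN": 0.0,    # no action, no missed opportunity
    "FN": -50.0,  # missed sale: negative of the contribution margin
}

# Hypothetical outcome counts for two competing models on the same data.
model_a = {"TP": 120, "FP": 400, "TN": 9400, "FN": 80}
model_b = {"TP": 150, "FP": 900, "TN": 8900, "FN": 50}

value_a = model_value(model_a, values)
value_b = model_value(model_b, values)
print(value_a, share_correct(model_a))
print(value_b, share_correct(model_b))
```

With these assumed numbers, model A gets 95.2% of predictions right and is worth 800, while model B gets only 90.5% right but is worth 2,300, because a missed sale costs far more than a wasted offer.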

How to Refine Business Value Estimation with Confidence levels

Like us, models can also assess their likelihood of being right. This value is almost as important as the results themselves: your company can use it to refine its manual check/audit mechanism or the business decisions it takes based on the model, and so further improve output. The refinement enabled by confidence levels depends on whether the model is solving a problem where humans outperform the model:

- Consider introducing additional controls for low-confidence cases in predictions where humans outperform the model at a reasonable cost. It may sound counter-intuitive to use models in areas where humans are better. However, due to cost considerations, models perform tasks (such as optical character recognition or document data extraction) where humans are more capable. In these cases, using the confidence level as an input to manual controls can help improve results. For example, if all of a model’s false predictions have low confidence, a manual check of low-confidence outputs would enable your company to catch every error, assuming the human reviewers are always right.

- Consider not following model predictions where the model’s confidence level is low if either

  - humans cannot outperform the model (e.g. in big data solution areas like recommendation personalization) or

  - human time cost is not worth the value generated by a correct decision.

An example where the model’s low-confidence predictions are disregarded is the identification of customers for targeted campaigns. The cost of sending a campaign message to a customer who may not buy the product is relatively low, while the value from a sale is high. Therefore, companies may want to send offers to customers even if the model predicts, with low confidence, that they will not buy the product.
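The two policies described above can be sketched as a simple routing rule. This is a minimal illustration under stated assumptions: the `Prediction` class, the `decide` function, the 0.7 threshold and the `human_review_available` flag are all hypothetical, and in practice the threshold would be tuned against the business values of each outcome.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    predicted_buyer: bool  # the model's answer for this customer
    confidence: float      # model's confidence in its own answer, from 0 to 1


def decide(prediction, threshold=0.7, human_review_available=False):
    """Return 'send_offer', 'skip' or 'manual_review' for one prediction."""
    if prediction.confidence >= threshold:
        # High confidence: follow the model.
        return "send_offer" if prediction.predicted_buyer else "skip"
    if human_review_available:
        # Low confidence and humans outperform the model at a reasonable cost.
        return "manual_review"
    # Low confidence and no review: in the campaign example an offer is cheap
    # and a sale is valuable, so send the offer regardless of the prediction.
    return "send_offer"


print(decide(Prediction(predicted_buyer=False, confidence=0.95)))
print(decide(Prediction(predicted_buyer=False, confidence=0.55)))
print(decide(Prediction(predicted_buyer=True, confidence=0.60), human_review_available=True))
```

When manual review is used, its labor cost per reviewed item should be subtracted from the model value, as discussed in the introduction.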

In this article, we focused on comparing different machine learning models and the value they generate for your business. Based on the 4 types of results of a model (true positives, false positives, true negatives and false negatives), statisticians derive other ratios to discuss model quality. The most common ones are precision and recall, sensitivity and specificity, and the F1 score. The Wikipedia articles on these metrics are worth reading if you are likely to find yourself in a meeting where technical personnel discuss model results. However, none of those metrics is likely to be an accurate assessment of a model in terms of its business value, as they do not take into account the specific business value of each result.
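For reference, these ratios follow directly from the four counts. The sketch below uses their standard definitions; the function name `classification_metrics` and the sample counts are illustrative assumptions.

```python
def classification_metrics(tp, fp, tn, fn):
    """Standard ratios derived from true/false positive/negative counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0    # share of flagged items that are truly positive
    recall = tp / (tp + fn) if (tp + fn) else 0.0       # share of actual positives found (sensitivity)
    specificity = tn / (tn + fp) if (tn + fp) else 0.0  # share of actual negatives correctly left alone
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)  # harmonic mean of precision and recall
    else:
        f1 = 0.0
    return {
        "precision": precision,
        "recall": recall,
        "specificity": specificity,
        "f1": f1,
    }


# Hypothetical counts for one model.
metrics = classification_metrics(tp=8, fp=2, tn=85, fn=5)
print(metrics)
```

Note that none of these formulas contains a business value: a false positive and a false negative are weighted by the formula, not by what each one costs your company.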

Finally, please note that here we focused on the model results only. The other important aspect of assessing a model’s performance is the quality of the training data that the models learn from. As you can expect, it needs to be accurate, and large and varied enough to represent the future values that the model will encounter.

We hope our approach to machine learning model assessment was clear and helpful to you. Before assessing models, it makes sense to use the best tools to build those models. Check out our comprehensive ranking of machine learning software and data science/machine learning consultants to make sure that you use the right software and advisors to support your business.

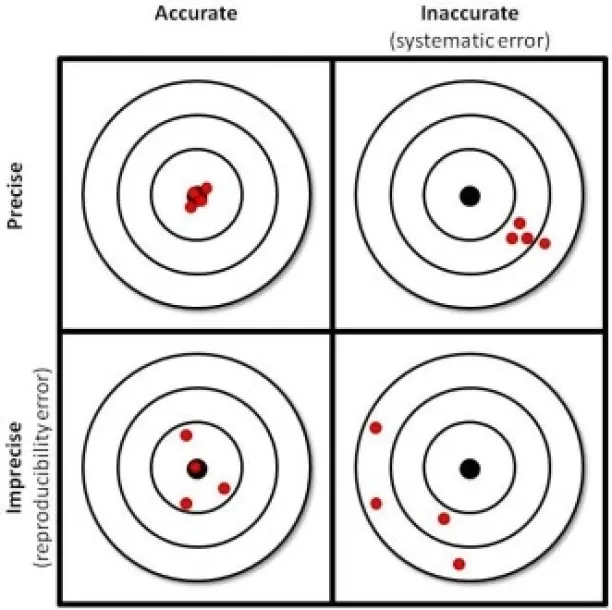

* We have used accuracy to mean the business value that the model can generate. This can be confusing for those with a background in statistics, as accuracy and precision are clearly defined terms. In measurement, accuracy refers to the closeness of a measured value to a standard or known value, and precision refers to the closeness of two or more measurements to each other. The accompanying picture demonstrates this clearly. We refer to the business value of the machine learning model as accuracy since this is a widely searched term on Google and the answers seem to indicate that users mean business value rather than accuracy in the statistical sense of the word.

Cem has been the principal analyst at AIMultiple since 2017. AIMultiple informs hundreds of thousands of businesses (as per Similarweb), including 60% of the Fortune 500, every month.

